You’ve got yourself a website. You’ve forked out money to get it built (or built it yourself – go you!), and then you get told that you’ll be paying an ongoing hosting fee for it. What that fee includes varies a lot between hosts, and it can leave you with surprises down the track if you go for the cheapest hosting option available, or if you find out later that you are being overcharged. So we thought we’d break down what we do at Webmad, so you can see where your hard-earned money is being spent.

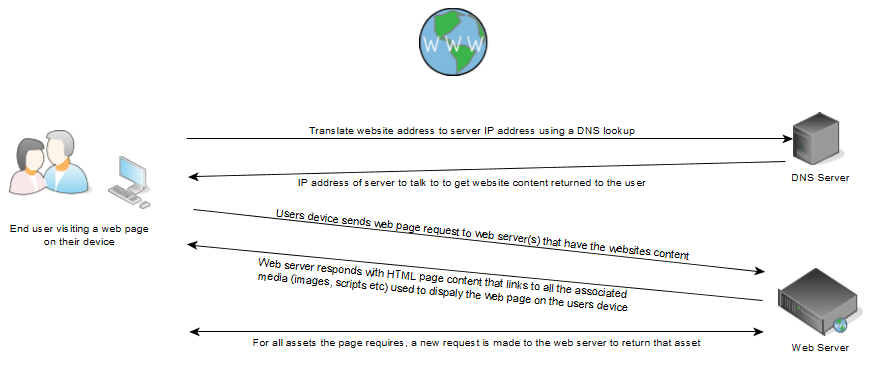

The first part of website hosting is the server: you’ve got to have one to host the website. Out on the internet, all websites are hosted somewhere – there will be one, or many, computers, powered on 24/7, waiting to respond with the right content at the right time, at your audience’s request. If the server that serves your website isn’t on, your website won’t respond when requested, and it will appear down. Not a great look.

But not all website hosting servers are made and configured equal. In order to maximise their revenue, some hosts cram as many websites as they can onto a single server. This is the same as running hundreds of applications on your home computer – they all just end up going slowly, if they respond at all. The more demand the websites on that server are under, the slower every site on the server is going to perform. There are tools like CloudLinux which can minimise the impact of this on a server, but in general, the more sites on a shared hosting server, the worse the performance of your site will be, even if the servers are massive.

Another way of maximising revenue is to use older or lower spec server hardware, or to extend the usage life of existing hardware – the less you have to spend to replace hardware, the more revenue you make. While this can work, especially if the host is really only babysitting your site for you and is not actively invested in what you are doing, it means that your site will not keep up with your competitors in terms of website page speed, and your website can get penalised by search engines like Google for not performing well for the end user.

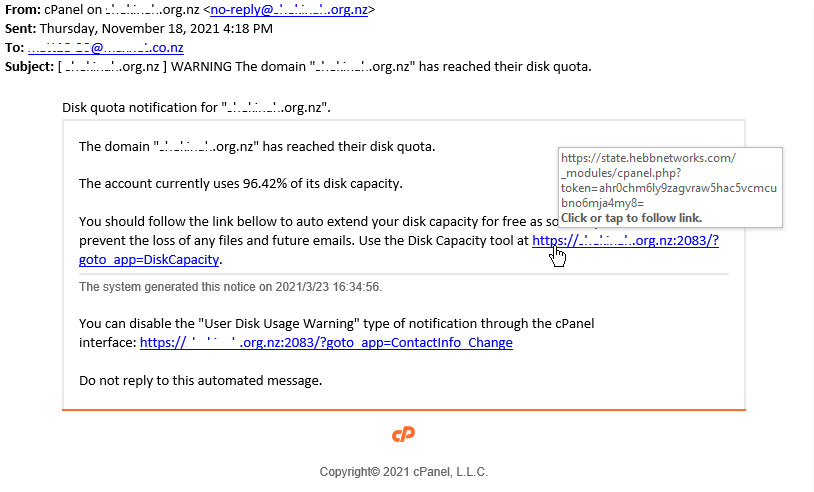

The worst cost-saving method we have seen from big name web hosts is to keep no website or hosting server backups at all, or only very limited ones. Sadly this is commonplace for the cheapest of the web hosts, with them claiming that it’s your website, so you should have a backup of it. This is very hard to do with e-commerce or similar sites that update regularly, and that may hold client-submitted information that needs to be backed up. The last thing you want, if your website gets infected or if the hosting provider has a meltdown and loses your website (this has certainly happened, even in fairly recent history, to big name hosting providers), is to be unable to restore it from a known good working backup.

What we do at Webmad is a little different to many of the web hosting companies out there, and we stand by what we do.

Firstly, we run cloud-based servers. What does that mean? Well – it means that our infrastructure is based on hardware provided by large, internationally renowned datacenter providers, on proven systems that either self-heal, or instantly move our hosting servers onto hardware that isn’t in a failed state. Downtime is minimised to only what is absolutely necessary to deliver you the best experience (i.e. installing software and security updates), and we are always on the latest and greatest hardware.

We also take backups of your website, and our entire servers, every 12 hours. Not enough? We can increase that to every 15 minutes if you need – a complete snapshot of your hosting environment – server specs, database state, all that fun stuff. And we keep that info for ages too (2 weeks for high-definition backups, moving to nightly for 3 months, then monthly, and so on), so if you need to audit anything, or need as little loss as possible if something were to go pear-shaped, we’ve got you covered.
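
For the technically curious, a tiered retention schedule like the one described above can be sketched in a few lines of Python. This is only an illustration of the idea, not our actual backup tooling: the function name `snapshots_to_keep`, the 14-day and 90-day cut-offs (our reading of "2 weeks" and "3 months"), and the rule of keeping the newest snapshot per day or per month are all assumptions made for the example.

```python
from datetime import datetime, timedelta

# Assumed tier boundaries: "2 weeks" read as 14 days, "3 months" as 90 days.
FULL_DETAIL_WINDOW = timedelta(days=14)
NIGHTLY_WINDOW = timedelta(days=90)


def snapshots_to_keep(snapshots, now):
    """Return the snapshot timestamps a tiered retention policy would retain.

    Hypothetical policy: keep every snapshot from the last 14 days, one per
    calendar day up to 90 days old, and one per calendar month after that.
    """
    keep = set()
    seen_days = set()
    seen_months = set()
    # Walk newest first so the latest snapshot in each day or month wins.
    for taken in sorted(snapshots, reverse=True):
        age = now - taken
        if age <= FULL_DETAIL_WINDOW:
            keep.add(taken)
        elif age <= NIGHTLY_WINDOW:
            day = taken.date()
            if day not in seen_days:
                seen_days.add(day)
                keep.add(taken)
        else:
            month = (taken.year, taken.month)
            if month not in seen_months:
                seen_months.add(month)
                keep.add(taken)
    return sorted(keep)


if __name__ == "__main__":
    # Example: 200 days of snapshots taken every 12 hours.
    now = datetime(2024, 7, 1)
    history = [now - timedelta(hours=12 * i) for i in range(400)]
    kept = snapshots_to_keep(history, now)
    print(f"{len(history)} snapshots taken, {len(kept)} retained")
```

The point of thinning out older backups like this is that recent history stays fine-grained, where a restore is most likely to be needed, while older history is still available for audits without storing every single snapshot forever.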

Webmad have a number of shared hosting servers that our clients’ websites are based upon, all under the same levels of protection and running high spec hardware. We limit the number and type of sites any one server can host, so that our servers have the greatest possible uptime and the greatest protection against high usage spikes impacting other users. We run hosting and monitoring software to keep your sites online around the clock, and if there are any issues, we can react fast to rectify the situation.

And if the worst were to happen and members of our team were taken out by a bus (or however you creatively want to dispose of us – we don’t encourage it though), there are at least 5 of the team who can keep the lights on and your hosting safe. And our systems are set up in such a way that external contractors could also be brought in to maintain the status quo as needed, so we have redundancy at all levels.

That’s all well and good, and I’m sure that others in the web hosting game can replicate this. But what really sets us apart, and what we are most proud of, is that we are a local team, based in Christchurch, and we’ve been doing this as Webmad for over 10 years (some in the team, for more than 20 years). You can walk in off the street, and say hi or grab a coffee with us. Ask us all the curly questions in person, or over the phone. We are accountable. We are a team that wants to partner with you and make your endeavours as successful as we can. We celebrate our clients’ successes, and respond as needed if they have emergencies we can help with. We aren’t afraid to answer the phone after hours. And we’d love to be working with you, too.